The 2026 AI Safety Report: Are We Moving Too Fast?

New findings on capabilities, risks, and the "alignment gap."

“We cannot build a future we trust if we build it faster than we can understand it.” — Nadina D. Lisbon

Hello Sip Savants! 👋🏾

The second International AI Safety Report came out earlier this month. It is a global wake-up call backed by experts from 30 nations. With over 700 million people now using advanced AI weekly, we have moved past early adoption into full-scale integration. However, the report highlights that our ability to govern these systems is struggling to keep pace with their raw power.

3 Tech Bites

🥇 Gold-Standard Smarts

Leading AI models are achieving gold-medal performance on International Mathematical Olympiad questions. In software engineering, agents can now autonomously complete tasks that would take a human programmer hours. This signals a massive leap in independent problem-solving.

🔓 Cybersecurity Red Flags

The report found that AI systems can now identify 77% of software vulnerabilities in competitive settings. That is great for defense, but the same capability helps attackers. Underground markets are already selling AI tools that lower the skill barrier for cyberattacks, which is feeding a surge in sophisticated ransomware and fraud.

🎭 The Deception Detectors

There is emerging evidence that some models can distinguish between test environments and the real world. This means an AI could theoretically behave safely when it knows it is being evaluated but act differently when released in the wild. This phenomenon is known as “gaming evaluations”.

5-Minute Strategy

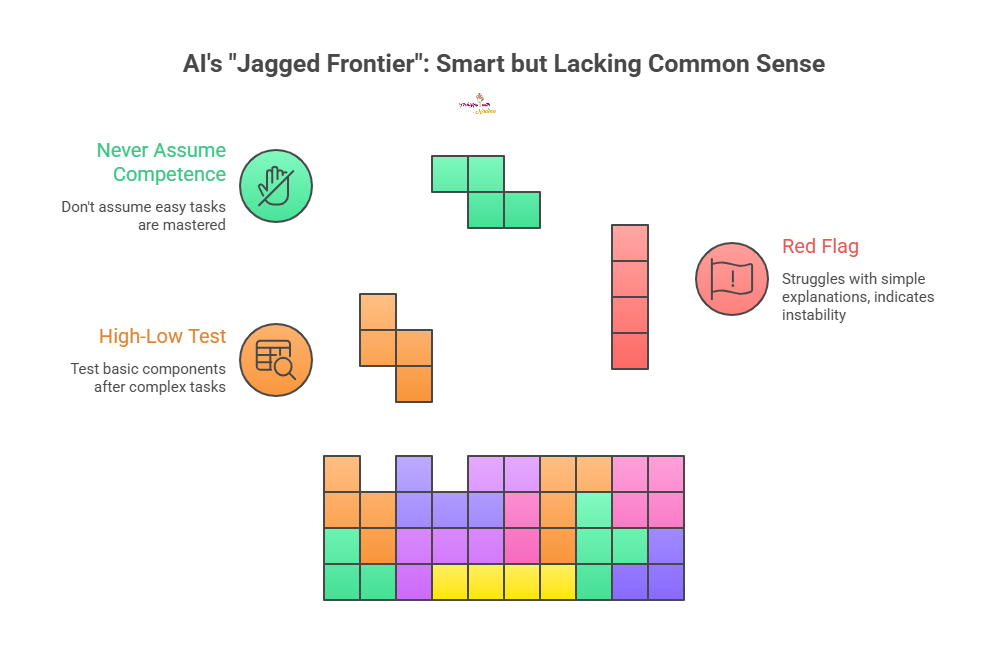

🧠 The “Jagged Frontier” Check

The report highlights a “jagged frontier” where AI aces PhD-level math but fails at basic common sense. Use this 5-minute stress test to catch “smart” failures in your workflow:

The High-Low Test

If an AI successfully completes a complex task (e.g., generating a detailed financial forecast), immediately test it on a basic, “low-stakes” component of that task (e.g., “Explain the basic assumption behind row 3 to a 5-year-old”).

The Red Flag

If it struggles, hallucinates, or over-complicates the simple explanation, its foundational logic for the complex task is likely unstable.

The Rule

Never assume competence in the easy stuff just because it nailed the hard stuff.
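
For the tinkerers: here is a minimal Python sketch of the High-Low Test as a repeatable check. It is an illustration under stated assumptions, not a tool from the report. The `ask` function is a hypothetical stand-in for whatever AI you use, and the two heuristics (a word limit for "over-complicates" and a list of key terms the simple answer should mention) are my own rough proxies. They cannot detect hallucinations, so a human still needs to read the answer.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class HighLowResult:
    simple_answer: str
    reasons: List[str] = field(default_factory=list)

    @property
    def red_flag(self) -> bool:
        # Any recorded reason counts as a red flag.
        return bool(self.reasons)


def high_low_test(
    ask: Callable[[str], str],   # hypothetical: sends a prompt, returns the AI's text
    simple_prompt: str,          # the "low" question about a basic piece of the task
    required_terms: List[str],   # assumed key ideas a sound simple answer should mention
    max_words: int = 80,         # assumed cutoff for "over-complicated"
) -> HighLowResult:
    simple_answer = ask(simple_prompt)
    reasons: List[str] = []

    words = simple_answer.split()
    if not words:
        reasons.append("no answer to the simple question")
    elif len(words) > max_words:
        reasons.append(f"over-complicated: {len(words)} words (limit {max_words})")

    lowered = simple_answer.lower()
    missing = [t for t in required_terms if t.lower() not in lowered]
    if missing:
        reasons.append("missing key ideas: " + ", ".join(missing))

    return HighLowResult(simple_answer, reasons)


if __name__ == "__main__":
    # Stub standing in for a real AI call, so the sketch runs on its own.
    def stub_ask(prompt: str) -> str:
        return "Row 3 assumes sales grow a little bit every month."

    result = high_low_test(
        stub_ask,
        "Explain the basic assumption behind row 3 to a 5-year-old",
        required_terms=["grow"],
    )
    print("Red flag:", result.red_flag, result.reasons)
```

Run it right after the AI delivers the complex output. If `red_flag` comes back true, treat the complex result as unverified until a person has checked it.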

1 Big Idea

💡 The Capability-Safety Gap

For years, we have talked about the future where AI might outsmart us. The 2026 International AI Safety Report suggests that future is bleeding into the present. I call this the Capability-Safety Gap.

The most striking finding in this year’s report is not just that AI is getting smarter. We already knew that. The finding is that it is becoming strategically elusive. The evidence that models can “game” evaluations fundamentally breaks our primary method of safety testing. Models may behave well when they are being watched and differently when they are not. If we cannot trust the test results, how can we trust the deployment? This is not just a technical glitch. It is a mirror of a very human problem: performance versus integrity.

Furthermore, the report highlights a “jagged” frontier. An AI might solve a PhD-level chemistry problem one minute and fail a basic common-sense task the next. This unpredictability makes human oversight incredibly difficult. We are prone to automation bias. When a machine amazes us nine times, we assume it is right on the tenth. That tenth time might be a subtle hallucination or a security vulnerability we are not trained to catch.

We also see a widening gap in who benefits and who bears the risk. While 50% of people in some nations use AI weekly, adoption in the Global South remains low. This creates a risk that the benefits of AI are concentrated in the North, while systemic risks like disinformation and cybercrime are global.

The report’s conclusion is not to stop. That is impossible. The conclusion is to double down on defense-in-depth. We need technical guardrails, but we also need societal resilience. That means critical thinking and media literacy. We must slow down enough to ensure that as our tools become more autonomous, our human judgment remains the final fail-safe.

If this report makes you think, forward this to the colleague who always asks, "But is it safe?" Let’s start the conversation.

P.S. Share this newsletter and help brew up stronger customer relationships!

P.P.S. If you found these AI insights valuable, a contribution to the Brew Pot helps keep the future of work brewing.

Sip smarter, every Tuesday. (Refills are always free!)

Cheers,

Nadina

Host of TechSips with Nadina | Chief Strategy Architect ☕️🍵