Talking Plants, Doctor Bots & The Ad Era

When AI Mediates Nature, Health, and Commerce

“We are entering the age of the ‘Synthetic Interface’—where we talk to plants to understand nature, and talk to bots to understand our bodies. The challenge isn’t just building smart AI, it’s building smart users.” — Nadina D. Lisbon

Hello Sip Savants! 👋🏾

The “AI Bowl” wasn’t just about sports this week; it was about a fundamental shift in the digital landscape. While OpenAI officially opened the door to advertisers, researchers in Cambridge opened the door to... talking flowers. It’s a week of stark contrasts: AI is simultaneously becoming a billboard, a medical triage nurse, and a translator for the natural world.

3 Tech Bites

📢 OpenAI Tests Ads in ChatGPT

OpenAI has officially begun testing ads for users on its Free and “Go” tiers in the US [1]. While CEO Sam Altman promises these sponsored results are “visually separate” and won’t influence the AI’s actual answers, the shift is significant. The system uses conversation topics to match ads—so asking for dinner ideas might now serve up a sponsored meal kit. This marks the transition of ChatGPT from a pure utility to a media platform.

🌿 Cambridge’s “Talking Plants”

At the Cambridge University Botanic Garden, a new exhibition uses AI to let visitors have two-way conversations with 20 different plants [2]. Powered by “Nature Perspectives,” plants like “Jade the Vine” and “Titus Junior” (a foul-smelling Titan Arum) have distinct personalities and can answer questions about biodiversity and evolution via text or voice. It’s a “world first” experiment in using AI to build empathy for the environment rather than just efficiency.

📉 The “Human Gap” in AI Medicine

A worrying new study from Oxford University reveals a critical flaw in AI healthcare. While LLMs correctly identified medical conditions in 94.9% of theoretical scenarios, participants using those same tools identified the correct condition only 34.5% of the time [3]. The study suggests the bottleneck isn’t the AI’s intelligence, but the user’s ability to ask the right questions and interpret the answers without bias.
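To put that gap in perspective, here is a quick back-of-the-envelope sketch in Python. It uses only the two percentages quoted above; the function name `accuracy_gap` and the variable names are illustrative assumptions, not anything taken from the study itself.

```python
# Figures quoted above from the Oxford study [3].
llm_alone = 94.9     # % of scenarios where the LLM alone identified the condition
with_humans = 34.5   # % where people using the same tools identified it


def accuracy_gap(baseline: float, observed: float) -> tuple[float, float]:
    """Return (absolute gap in percentage points, relative drop in percent)."""
    absolute = baseline - observed
    relative = absolute / baseline * 100
    return absolute, relative


gap_points, relative_drop = accuracy_gap(llm_alone, with_humans)
print(f"Absolute gap: {gap_points:.1f} percentage points")
print(f"Relative drop: {relative_drop:.1f}%")
```

Run as written, this prints a gap of 60.4 percentage points, which works out to a relative drop of roughly 63.6%. In other words, close to two-thirds of the model's standalone accuracy disappears once a person is in the loop.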

5-Minute Strategy

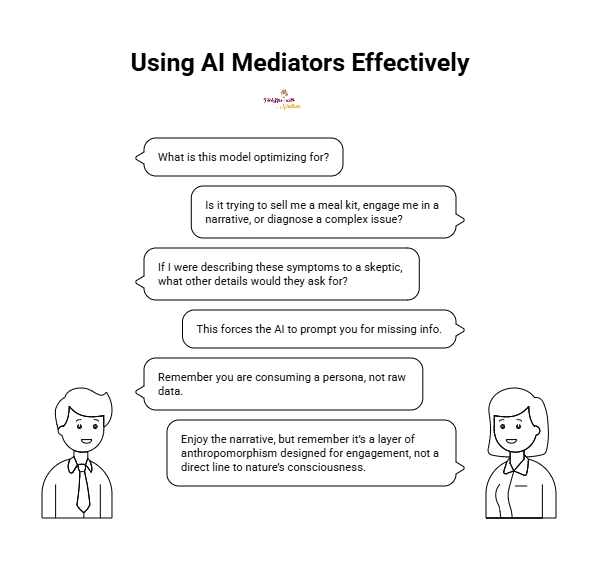

🧠 The “Mediator Check”

Whether you are talking to a bot about a rash, a recipe, or a rhododendron, you are using a mediator. Use this checklist to ensure nothing gets lost in translation.

Identify the Incentive

Before trusting the output, ask:

What is this model optimizing for? (e.g., Is it trying to sell me a meal kit [Ads], engage me in a narrative [Plants], or diagnose a complex issue [Health]?)

The “Rephrase” Test

If using AI for advice (like the Oxford study participants), never accept the first answer. Rephrase your query:

“If I were describing these symptoms to a skeptic, what other details would they ask for?” This forces the AI to prompt you for missing info.

Check the Source

For the “Talking Plants,” remember you are consuming a persona, not raw data. Enjoy the narrative, but keep in mind that it’s a layer of anthropomorphism designed for engagement, not a direct line to nature’s consciousness.

1 Big Idea

💡 The Interface is the Message

We often talk about “Model Alignment.” This is the technical challenge of ensuring an AI does what we want. But this week’s news exposes a far more immediate and human problem called User Alignment. We are delegating our perception of reality to machines before we have learned how to properly interrogate them.

We currently face three distinct forms of AI mediation. Each requires a different kind of vigilance.

First is Commercial Mediation. OpenAI’s introduction of ads on its Free and “Go” tiers fundamentally alters the texture of the truth. When an AI serves two masters, you and the advertiser, the “answer” becomes a marketplace. The danger isn’t necessarily that the AI will lie. The danger is that the intent of the interface shifts from “assist” to “monetize.” This subtly prioritizes queries that lead to transactions over those that lead to understanding.

Second is Ecological Mediation. This is seen in the Cambridge “Talking Plants” project. It is a fascinating “noble lie.” We are using AI to give vines “sassy” personalities to bridge an empathy gap. But if we only care about nature when it speaks like a human, are we connecting with the environment? Or are we just connecting with a digital simulation of it? It risks training us to value the performance of nature over the reality of it.

Third, and most critical, is Cognitive Mediation. The Oxford study acts as a harsh reality check for the “cyborg” future. It suggests that a “super-intelligent” doctor in your pocket is functionally useless if you don’t know how to speak “doctor.” Accuracy fell from 94.9% with the AI alone to 34.5% when humans used the tool, which implies that we are the bottleneck. We are passively accepting diagnoses we don’t understand because the interface looks authoritative.

The overarching danger here isn’t that AI will become sentient and conquer us. The danger is that we will become passive consumers of whatever reality the AI presents. This could be a sponsored product, a fictional plant personality, or a medical diagnosis we can’t verify.

The future of digital literacy isn’t just about coding. It is about Contextual Discernment. We must gain the ability to look at an AI’s output and understand why it was generated, who paid for it, and what makes it credible.

If your houseplant could talk, what would it say about your watering habits? Mine would definitely be filing a complaint with HR. 🪴

P.S. Share this newsletter and help brew up stronger customer relationships!

P.P.S. If you found these AI insights valuable, a contribution to the Brew Pot helps keep the future of work brewing.

Resources

Sip smarter, every Tuesday. (Refills are always free!)

Cheers,

Nadina

Host of TechSips with Nadina | Chief Strategy Architect ☕️🍵

Amazing sip, as always. I was not a huge fan of all the AI commercials during the Super Bowl; they seem to be part of the never-ending battle to turn a profit with AI instead of furthering humanity with it. Leveraging AI to diagnose medical issues seems like an incredible use case that would be great long term if false positives could be reduced and the "right" questions could be surfaced for diagnosis.