Drawing the Red Line: AI, Authority, and Authorship

From the Pentagon to the local newsroom, who really controls the narrative when AI takes the wheel?

“Technology can process the data, but only humanity can provide the moral compass.” — Nadina D. Lisbon

Hello Sip Savants! 👋🏾

It has been a whirlwind week in the tech world, highlighting just how quickly artificial intelligence is intersecting with our most critical institutions. The Pentagon recently clashed with leading AI firms over the ethical boundaries of military technology, resulting in unprecedented government bans and controversial new defense contracts [1, 2]. Meanwhile, on a local level, traditional journalism is undergoing a radical shift as legacy newspapers hand over the writing process to AI rewrite desks [3]. These current events force us to ask a crucial question: as we delegate more power to algorithms, how do we protect our distinctly human values? Grab your coffee, and let us dive in!

3 Tech Bites

🛑 The Pentagon’s AI Ultimatum

Anthropic CEO Dario Amodei recently refused to cross two ethical “red lines” for the Department of Defense: no AI-powered mass surveillance and no fully autonomous weapons without human oversight. This stand led the government to cancel over $200 million in federal contracts and declare the company a supply chain risk [1]. It is a stark reminder that corporate values can collide head-on with government demands.

🤝 OpenAI’s Controversial Compromise

While Anthropic walked away, OpenAI stepped in, signing a new cloud-only deployment agreement with the Pentagon [2]. OpenAI claims its contract includes strict technical safeguards and cleared personnel in the loop. However, critics and financial analysts note the agreement essentially aligns with “current laws,” meaning that if military policies change, the ethical guardrails could legally shift right along with them [2, 4].

📰 The Robot Reporter

The Cleveland Plain Dealer is now using an AI "rewrite desk" to draft local stories [3]. Reporters submit notes, and generative AI produces the prose, allowing journalists to hit a quota of four stories a day. While editors claim this frees up humans for deeper community reporting, critics worry it risks sacrificing journalistic credibility and the nuanced understanding of local culture [3].

5-Minute Strategy

🧠 The “Lived Experience” Edit

Generative AI is great at predicting the next logical word, but it cannot predict human nuance. Today, practice injecting what algorithms lack: your lived experience. Take five minutes to do this “Friction Check” on your next AI-assisted draft.

1. Open an email, report, or proposal you recently drafted using an AI tool.
2. Locate the single most important recommendation or conclusion in the text.
3. Temporarily delete the AI-generated reasoning supporting that conclusion.
4. Rewrite that specific justification using only a personal anecdote, a recent team conversation, or a highly specific situational context the AI could never access.
5. Compare the two versions.

By deliberately replacing algorithmic logic with your own human context, you reclaim authorship. This quick exercise ensures you are not just a passive editor of machine outputs, but the active moral compass of your work.

1 Big Idea

💡 The Illusion of Delegated Responsibility

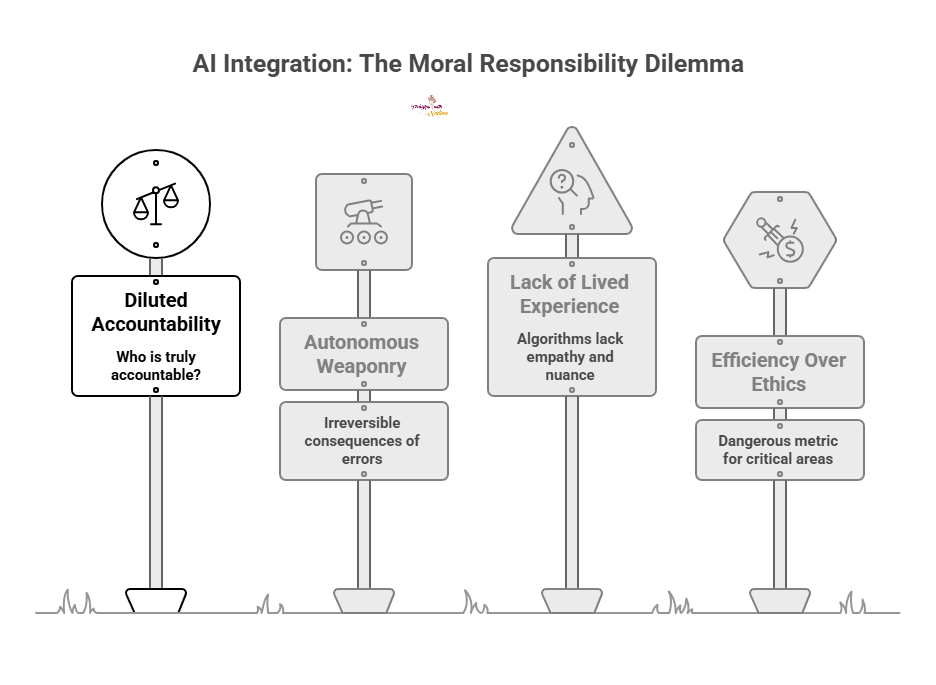

We are standing at a fascinating and perilous crossroads. As AI systems become more capable, the temptation to hand over the reins grows with them. From the battlefield to the newsroom, we are seeing a push to automate complex, high-stakes tasks. But as we integrate these systems into the fabric of society, we have to confront a difficult reality: you can outsource the labor, but you cannot outsource the moral responsibility.

When a local newspaper uses AI to write a story about a community event, the algorithm does not understand the emotional weight of the town’s history [3]. It simply predicts the next logical word. If that article misrepresents a vulnerable local group, who is truly accountable? The reporter who gathered the notes? The editor who skimmed the draft? Or the tech company that built the model? This dilution of accountability is one of the most significant risks we face today.

This issue is magnified on a national scale. The recent standoff between Anthropic and the Pentagon highlights the terrifying potential of autonomous weaponry [1]. If an AI system independently directs a strike based on an algorithmic hallucination, the consequences are irreversible. The insistence on a “human-in-the-loop” is not just a technical preference; it is a fundamental requirement for ethical warfare. OpenAI’s new agreement relies on the premise that current laws will protect us [2, 4], but laws lag behind innovation.

The core problem is that algorithms lack lived experience. They do not feel regret, they do not understand nuance, and they do not possess a conscience. When we remove humans from the decision-making process, we strip away the empathy required to make truly just choices. Efficiency is a fantastic goal for a factory assembly line, but it is a dangerous metric for journalism, justice, and defense.

So, where do we go from here? We must actively choose to stay involved. We have to demand transparency from tech giants and clear regulations from our leaders. More importantly, in our daily lives, we must fiercely protect the spaces where human connection and ethical judgment are non-negotiable. The future of AI should not be about how much we can surrender to machines, but rather how we can use machines to elevate our own humanity.

P.S. Share this newsletter and help brew up stronger customer relationships!

P.P.S. If you found these AI insights valuable, a contribution to the Brew Pot helps keep the future of work brewing.

Resources

Sip smarter, every Tuesday. (Refills are always free!)

Cheers,

Nadina

Host of TechSips with Nadina | Chief Strategy Architect ☕️🍵