Can We Trust Machines to Write Medical History?

Navigating Cochrane’s Bold New Leap into Automated Evidence Synthesis

“Technology should be our stethoscope, a tool that amplifies the pulse of human knowledge, not a replacement for the heart that understands it.” — Nadina D. Lisbon

Hello Sip Savants! 👋🏾

This week, the world of evidence-based medicine was rocked by a preprint protocol that feels like a scene from a sci-fi thriller, but it is very much our 2026 reality. Cochrane, widely regarded as the gold standard for clinical evidence, has launched the CESAR project [1]. They are not just “testing” AI; they are putting it through a “platform trial,” the same kind of adaptive design used to evaluate COVID-19 treatments, to see if AI can finally handle the heavy lifting of systematic reviews without losing the human touch.

3 Tech Bites

🧪 The Platform Shift

Unlike static tests, the CESAR study uses an adaptive platform design. This means Cochrane can "fire" underperforming AI tools or "hire" new ones in real time without stopping the study, so that only the best-performing tools stay in the evaluation [2].

🤖 Meet the Contenders

Two tools, Laser AI and Nested Knowledge, were selected from 48 applicants. These are not just chatbots; they are specialized systems being tested on their ability to screen abstracts and extract complex medical data with surgical precision [1].

⚖️ The RAISE Standard

All tools must follow the RAISE principles (Responsible use of AI in Evidence SynthEsis). This framework ensures that "human-centered" does not just mean a person clicks a button, but that humans maintain agency and oversight over every robotic "thought" [3].

5-Minute Strategy

🧠 Applying the CESAR Framework to Your AI Workflow

Cochrane uses a “Platform Trial” approach to ensure AI tools actually deliver. You can apply this same rigor to any tool you use in under five minutes by asking these four specific “CESAR-inspired” questions:

The “Active Control” Test

Compare the AI output to your best manual version of the same task. Is the AI actually faster, or are you spending more time fixing its “hallucinations” than you would have spent doing the work yourself?

The Extraction Audit

Pick one complex data point the AI produced. Can you trace it back to a specific source sentence in under 30 seconds? If the “provenance” is missing, the tool is a risk, not an asset.

The Discordance Check

If you and the AI disagree, why? Use that friction to identify if the AI is missing nuance or if you are showing a human bias.

The “Fireable” Offense

Define one specific error (e.g., missing a key safety warning) that would make you stop using the tool immediately. Having this boundary guards against “automation bias,” where we trust the machine blindly.
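If you like to keep score, the four questions above can be logged in a few lines of Python. This is a minimal sketch, not anything taken from the CESAR protocol: the `ToolTrial` class, its field names, the 30-second tracing limit, and the "keep using" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToolTrial:
    """One quick trial of an AI tool (hypothetical fields, for illustration)."""
    manual_minutes: float               # time for your best manual version
    ai_minutes: float                   # time for the AI to produce its output
    fix_minutes: float                  # time you spent correcting the AI output
    traced_in_seconds: Optional[float]  # time to trace one data point; None if untraceable
    disagreements: int                  # items where you and the AI differed
    items_checked: int                  # total items you compared
    fireable_error_seen: bool           # did your predefined "fireable" error occur?


def review(trial: ToolTrial) -> dict:
    """Score a trial against the four CESAR-inspired questions."""
    # Active control: net time saved once fixing time is counted.
    net_saved = trial.manual_minutes - (trial.ai_minutes + trial.fix_minutes)
    # Extraction audit: was the data point traced within 30 seconds?
    traceable = (
        trial.traced_in_seconds is not None and trial.traced_in_seconds <= 30
    )
    # Discordance check: share of compared items where you and the AI disagreed.
    discordance = (
        trial.disagreements / trial.items_checked if trial.items_checked else 0.0
    )
    # Fireable offense: any occurrence overrides everything else.
    keep = net_saved > 0 and traceable and not trial.fireable_error_seen
    return {
        "net_minutes_saved": net_saved,
        "traceable": traceable,
        "discordance_rate": discordance,
        "keep_using": keep,
    }
```

The discordance rate is reported but deliberately left out of the keep-or-drop rule: disagreement is a prompt to look closer, not a verdict on its own.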

1 Big Idea

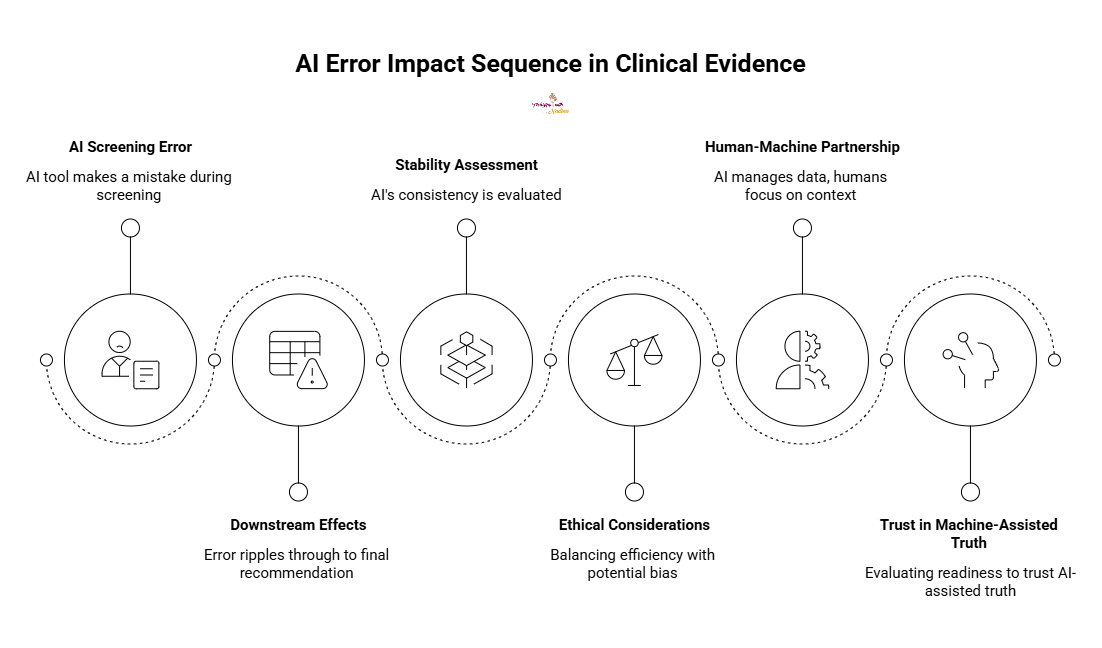

💡 Quantifying the “Butterfly Effect” of AI Errors

Most AI evaluations focus on a simple accuracy score: did the AI get 90% of the questions right? But in the world of clinical evidence, a 10% error rate is not just a statistic; it is a potential public health risk. The most innovative part of Cochrane’s new study is not the AI itself, but its attempt to quantify the downstream effects of errors [1].

If an AI tool makes a mistake during the screening phase, how does that ripple through to the final recommendation a doctor reads? We are moving past the “AI is cool” phase and into the “AI is accountable” phase. This requires us to look at the stability of AI: will it give the same answer twice?
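One rough way to put a number on "the same answer twice" is to run a tool twice on the same abstracts and count how often its decisions match. The sketch below assumes you have already collected both runs as lists of labels; the function names and the "include"/"exclude" labels are hypothetical, and this is not the stability measure defined in the CESAR protocol.

```python
def stability(run_a, run_b):
    """Return the fraction of items that got the same decision in both runs."""
    if len(run_a) != len(run_b):
        raise ValueError("both runs must cover the same items")
    if not run_a:
        raise ValueError("runs must not be empty")
    matches = sum(a == b for a, b in zip(run_a, run_b))
    return matches / len(run_a)


def flipped(run_a, run_b):
    """Return the positions of items whose decision changed between runs."""
    return [i for i, (a, b) in enumerate(zip(run_a, run_b)) if a != b]


# Hypothetical screening decisions for four abstracts, two runs of one tool.
first_run = ["include", "exclude", "include", "exclude"]
second_run = ["include", "exclude", "exclude", "exclude"]

print(stability(first_run, second_run))  # share of matching decisions
print(flipped(first_run, second_run))    # which abstracts to re-check by hand
```

The flipped items are the useful part: they tell you exactly which decisions deserve a human second look.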

We must grapple with the ethical weight of efficiency. If we can produce medical reviews in days instead of years, we save lives. But if that speed introduces a subtle bias toward certain types of studies, we risk eroding the very trust that makes Cochrane the “gold standard.”

The future of medical truth is not a race between humans and machines; it is a partnership where the machine manages the data deluge so the human can focus on what matters: context, nuance, and empathy. Are we ready to trust the “machine-assisted” truth, or does the human hand need to be on the pen at all times?

AI is moving faster than the peer-review process. How are you keeping your skills sharp? Reply and let me know if you would trust an AI-generated medical summary! I read every response!

P.S. Share this newsletter and help brew up stronger customer relationships!

P.P.S. If you found these AI insights valuable, a contribution to the Brew Pot helps keep the future of work brewing.

Resources

[1] Cochrane Evaluation of (Semi-) Automated Review (CESAR) Methods: Protocol | medRxiv

[2] What makes Cochrane's new AI study innovative? | Cochrane News

[3] How did Cochrane select AI tools to evaluate? | Cochrane News

Sip smarter, every Tuesday. (Refills are always free!)

Cheers,

Nadina

Host of TechSips with Nadina | Chief Strategy Architect ☕️🍵